Interest in creating rendered environments and content for virtual and augmented reality is growing rapidly. Currently, the most popular approaches are polygonal environments built with game engines and 360-degree spherical cameras that capture live-action video. These tools were not originally designed to exploit the richer visual cues available in immersive environments when users shift viewpoints laterally, interact manually with models, and use stereoscopic vision. Computer graphics techniques need a fresh look to capitalize on the unique affordances that make virtual and augmented reality so compelling. Furthermore, creating high-quality immersive content by hand is time- and labor-intensive. To address these challenges, the Illusioneering Lab researches new techniques and technologies for creating lifelike immersive content through the automatic capture and digitization of real-world objects, people, and behaviors.

Software

Selected Publications

Capture to Rendering Pipeline for Generating Dynamically Relightable Virtual Objects with Handheld RGB-D Cameras. C. Chen and E. Suma Rosenberg. ACM Symposium on Virtual Reality Software and Technology, 2020. https://illusioneering.cs.umn.edu/papers/chen-vrst2020.pdf

Automatic Generation of Dynamically Relightable Virtual Objects with Consumer-Grade Depth Cameras. C. Chen and E. Suma Rosenberg. IEEE Conference on Virtual Reality and 3D User Interfaces (Research Demo), 2019. https://illusioneering.cs.umn.edu/papers/chen-vr2019.pdf

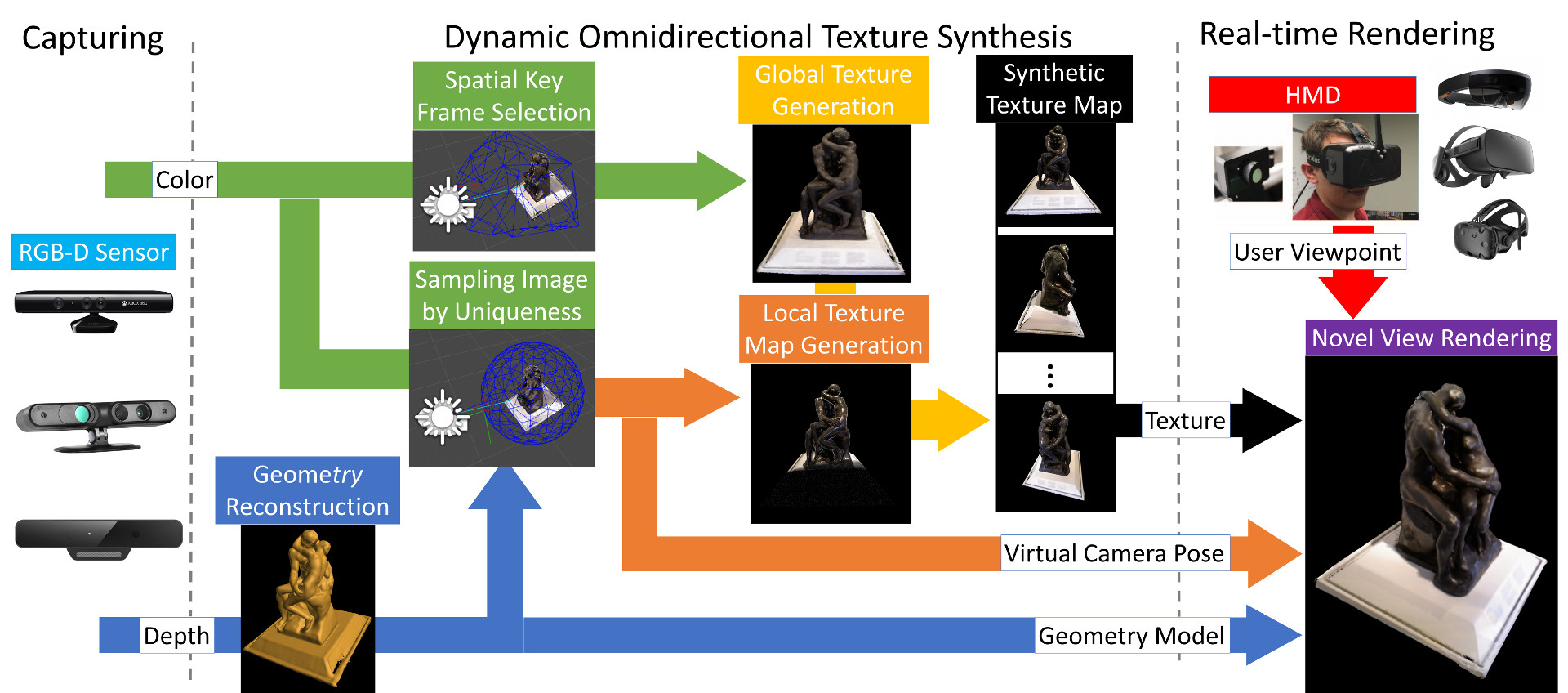

Dynamic Omnidirectional Texture Synthesis for Photorealistic Virtual Content Creation. C. Chen and E. Suma Rosenberg. IEEE International Symposium on Mixed and Augmented Reality (ISMAR-Adjunct), 2018. https://illusioneering.cs.umn.edu/papers/chen-ismar2018.pdf

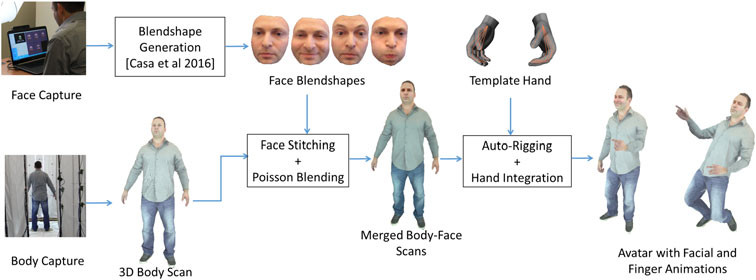

Just-in-time, Viable, 3-D Avatars from Scans. A. Feng, E. Suma Rosenberg, and A. Shapiro. Computer Animation and Virtual Worlds, 28 (3-4), pp. e1769, 2017. http://onlinelibrary.wiley.com/doi/10.1002/cav.1769/full

View-dependent virtual reality content from RGB-D images. C. Chen, M. Bolas, and E. Suma Rosenberg. IEEE International Conference on Image Processing, pp. 2931–2935, 2017. https://illusioneering.cs.umn.edu/papers/chen-icip2017.pdf

Rapid Photorealistic Blendshape Modeling from RGB-D Sensors. D. Casas, A. Feng, O. Alexander, G. Fyffe, P. Debevec, R. Ichikari, H. Li, K. Olszewski, E. Suma, and A. Shapiro. International Conference on Computer Animation and Social Agents, pp. 121–129, 2016. https://illusioneering.cs.umn.edu/papers/casas-casa2016.pdf

Creating Near-Field VR Using Stop Motion Characters and a Touch of Light-Field Rendering. M. Bolas, A. Kuruvilla, S. Chintalapudi, F. Rabelo, V. Lympouridis, C. Barron, E. Suma, C. Matamoros, C. Brous, A. Jasina, Y. Zheng, A. Jones, P. Debevec, and D. Krum. ACM SIGGRAPH Posters, 2015. https://illusioneering.cs.umn.edu/papers/bolas-siggraph2015.pdf

Rapid Avatar Capture and Simulation Using Commodity Depth Sensors. A. Shapiro, A. Feng, R. Wang, H. Li, M. Bolas, G. Medioni, and E. Suma. Computer Animation and Virtual Worlds, 25 (3-4), pp. 201–211, 2014. http://onlinelibrary.wiley.com/doi/10.1002/cav.1579/full